I took part in a really interesting discussion late last week, and into the first part of this week regarding the usefulness of unit testing in the format most of us(?) practice.

To give some context: a problem was discovered whilst preparing a Live deployment. The problem itself was really small: an array being re-instantiated in a conditional, about 5 lines after it had originally been instantiated. That’s a very nerdy way of saying this was happening:

$myArray = ['a'];

if ($something) {

$myArray = [];

}

This is the real world. Stuff happens. We deal with it, we (hopefully) find a way to mitigate it, and we move on.

My process of mitigation was to create a set of unit tests for the file in question. I used my typical approach:

- Cover the default happy path – only mandatory arguments, unit testing a few variations if needed

- Cover the alternative happy path – any optional arguments

- Check for the obvious bad stuff, and assert the mitigation is as expected

I put the code in for review, and got some interesting feedback:

I don’t think Unit testing this class is the way to go… in an ideal world

I am paraphrasing somewhat, but stick with me.

Looking at this made me question everything I do around testing.

I deeply value and trust the opinion of this reviewer, and they are telling me that unit testing a class is not the way to go?

Am I doing unit testing all wrong?

There was a fair bit more to this piece of feedback on this particular PR. The reviewer had been kind enough to offer more detail on their thoughts for this issue.

This person’s preferred approach would be to test the interactions with this class, rather than the class itself.

To test the wider system behaviour, rather than the individual steps.

And this got me to thinking. I’d heard this advice before. I’ve read this advice before. But I started to question if it had sunk in.

Am I wrong to think unit tests add value here?

If unit tests haven’t already been created for this class, is it even worth adding them now?

At some point, can explicitly untested code ever be considered trusted?

I mulled over a bunch of questions like these all weekend.

My Perspective on Unit Testing

As a beginner to a project, my approach when unit testing is to work my way up.

I start with some problem to solve, and I follow that one tiny path from beginning to end, and see what I interact with along the way.

For any class I find, I look for a unit test.

If I find one, I read it.

If one doesn’t exist, I try to create one.

This isn’t always possible, particularly on legacy code.

In that case, one solution might be to hide implementations behind an interface. This way you can A/B any new code you do write, giving you options.

Once I have done this, I create a unit test for the new / revised / alternative implementation.

I keep doing this until I reach the end of the request>response life-cycle.

This causes me to write mostly one type of tests.

I write a lot of unit tests. When I don’t see them, I write them.

I believe this adds value. At the very least, this adds complimentary value.

Another reviewer in the same thread, another very intelligent and smart person whose opinion I valued linked to some related reading. An Uncle Bob article, in particular.

Another reviewer in the same thread, another very intelligent and smart person whose opinion I valued linked to some related reading. An Uncle Bob article, in particular.

I read that article twice, in full.

And I didn’t understand it.

Specifically, I didn’t understand this bit:

As the tests get more specific, the production code gets more generic.

This article takes the point of view that if you’re typically src and test files look something like:

- MyImportantConcreteThing.php

- MyImportantConcreteThingTest.php

That your tests are highly coupled to your production code. Which makes refactoring – true refactoring – inherently more difficult.

I am super guilty of calling any changes to my code refactoring. It sounds very official. Sorry, I can’t come to the pub, I’m refactoring.

Refactoring is defined as a sequence of small changes that keep the tests passing at all times

If the unit tests are tightly coupled to your implementation, it’s highly likely that small changes to your code break, comparatively, a lot of tests.

Keeping the test suite up to date becomes a chore, and is soon sacrificed when project managers push for constant changes. The rot sets in.

What I Learned

Look for behaviour. Then test that behaviour.

I agreed with this approach already.

My perspective of what constitutes behaviour is where I have been asking myself the most questions.

I feel I needed to understand the behaviour of that one class. As an outsider looking in, I found value in this approach.

I’ve learned to question the correct layer in which adding a test, or set of tests, gives the most benefit.

It may be that your problem is solved by an integration test suite. On larger projects, this test suite may not even be in the same language you’re working in. This presents different challenges.

Tools like Behat, and PHPSpec have led me down some paths that have been encouraging me to work like Uncle Bob, without even realising it.

I’ve also learned that I still have a lot to learn about unit testing. That’s a great thing. I have ordered Martin Fowler’s Refactoring book to better inform myself of what Refactoring is truly supposed to be about.

There are some interesting links I’d like to share with you this week around this subject:

And this talk:

I’d love to hear your opinions on this topic, too.

Video Update

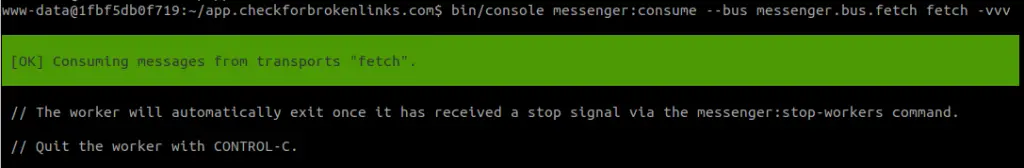

This week saw three new videos added to the site.

This is something a little different. Members only. Enjoy.

I like to run my unit test suite a lot whilst I’m developing. Tools like Facebook’s Jest have spoiled me. I want my unit tests to run automatically whenever I make a change to my code, or my tests.

If you’re like me, too lazy to keep hitting that damn up-arrow key, then this solution may be great for you.

There’s just one caveat: you need to be using Linux.

There is a chance this might work if using Windows Linux Subsystem (or whatever name it has). If you try it on Windows, please let me know if it works. Or you could always use Linux, the best OS.

We wrapped up the API Platform portion of the Beginners Guide to Back End (JSON API) + Front End Development [2018] series.

This involved looking at the output when things go wrong. And capturing this data in our Behat tests.

Happy Days

Ok fans of Fonzie that I know you are, I’m going to wish you glad tidings for the weekend.

Ok fans of Fonzie that I know you are, I’m going to wish you glad tidings for the weekend.

I’m looking forwards to next week, where I’m hoping to get 2 solid days of recording on the Live Stream project.

Oh by the way, I know it’s not a true / proper Live Stream. That’s coming, I just haven’t had time to figure out how to set it up.

Until next week, have a great weekend, and happy coding.

Chris

Ok fans of Fonzie that I know you are, I’m going to wish you glad tidings for the weekend.

Ok fans of Fonzie that I know you are, I’m going to wish you glad tidings for the weekend.