Perhaps “how I fixed” is a poor title for this one. I don’t think I fixed it, but I found a workaround.

Here’s the gist of the problem:

    docker run --rm \
      --env-file /path/to/my/terraform/azure/.env \
      -v /path/to/my/terraform/azure:/workspace \
      -w /workspace \
      my-custom/terraform:local \
      apply --auto-approve

    ╷
    │ Error: The number of path segments is not divisible by 2 in ""
    │
    │ with azurerm_linux_virtual_machine.christest,
    │ on create-instance.tf line 1, in resource "azurerm_linux_virtual_machine" "christest":
    │ 1: resource "azurerm_linux_virtual_machine" "christest" {
    │
    ╵
    ╷
    │ Error: The number of path segments is not divisible by 2 in ""
    │
    │ with azurerm_linux_virtual_machine.christest,
    │ on create-instance.tf line 1, in resource "azurerm_linux_virtual_machine" "christest":
    │ 1: resource "azurerm_linux_virtual_machine" "christest" {
    │
    ╵
    ╷
    │ Error: The number of path segments is not divisible by 2 in ""
    │
    │ with azurerm_linux_virtual_machine.christest,
    │ on create-instance.tf line 1, in resource "azurerm_linux_virtual_machine" "christest":
    │ 1: resource "azurerm_linux_virtual_machine" "christest" {
    │

Some extra context that may or may not be relevant here: I wanted to run Terraform through Docker. To work with the Azure command line (az), I had to bake it into the Dockerfile:

    FROM hashicorp/terraform:1.0.10

    RUN \
      apk update && \
      apk add bash py-pip && \
      apk add --virtual=build gcc libffi-dev musl-dev openssl-dev python3-dev make && \
      python3 -m pip install --upgrade pip && \
      python3 -m pip install azure-cli && \
      apk del --purge build

To build the image, I then run docker build -t my-custom/terraform:local . which is where the custom Docker image in the command above comes from. Names changed to protect the innocent.

Anyway, I have a bunch of files in this project mainly to split things out for the sake of my sanity. Where Terraform seemed to die was with this first file:

    resource "azurerm_linux_virtual_machine" "christest" {
      name                            = "${var.owner}-vm"
      resource_group_name             = azurerm_resource_group.christest.name
      location                        = azurerm_resource_group.christest.location
      size                            = var.instance_size
      admin_username                  = "adminuser"
      admin_password                  = "abadpassword"
      disable_password_authentication = false
      network_interface_ids = [
        azurerm_network_interface.christest.id,
      ]
      source_image_id = var.source_image_id
      os_disk {
        storage_account_type = "Standard_LRS"
        caching              = "ReadWrite"
      }
    }

By and large, I’d simply copied this from the docs and then tried to be a smart arse and turned some of the things into variables.

Here’s where things got confusing.

As above, the Terraform output complains that:

Error: The number of path segments is not divisible by 2 in ""

This error repeats three times.

Hmmm. Three times… well, wait. Don’t I have three variables here, right at the top? Probably them, right?

No. No matter what I did – and it got to the point where I hardcoded them – the error remained. If it remained when they were just plain old strings, there was no way it was these lines causing the problem.

So I dutifully copied and pasted the entire Azure config in from the docs and, lo and behold, that worked first time. D’oh.

What else had I changed?

source_image_id = var.source_image_id

And the associated variable I’d created:

    # az vm image list --output table
    variable "source_image_id" {
      description = "The ID of the Image which this Virtual Machine should be created from"
      type        = string
      default     = "Canonical:UbuntuServer:18.04-LTS:latest"
    }

That’s not how it’s set in the example from the docs. Here’s what they have:

    source_image_reference {
      publisher = "Canonical"
      offer     = "UbuntuServer"
      sku       = "18.04-LTS"
      version   = "latest"
    }

Annoyingly, I didn’t even need this set as a variable. I’d just tried to be that aforementioned smart arse, which had bitten me on said arse.
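If the goal is still to keep the image in a single variable, one option is to split the colon-separated publisher:offer:sku:version string into the four fields that source_image_reference expects. This is only a sketch: the variable name source_image_urn and the local image_parts are made up for illustration, and the resource is shown as a fragment with its other arguments left out.

```hcl
# Hypothetical variable: the image in publisher:offer:sku:version form.
variable "source_image_urn" {
  description = "Marketplace image in publisher:offer:sku:version form"
  type        = string
  default     = "Canonical:UbuntuServer:18.04-LTS:latest"

  # Fail early if the string does not have exactly four parts.
  validation {
    condition     = length(split(":", var.source_image_urn)) == 4
    error_message = "The image must have exactly four colon-separated parts."
  }
}

locals {
  # split() returns a list: [publisher, offer, sku, version]
  image_parts = split(":", var.source_image_urn)
}

resource "azurerm_linux_virtual_machine" "christest" {
  # ... other arguments unchanged from the resource shown earlier ...

  # Replaces the source_image_id line.
  source_image_reference {
    publisher = local.image_parts[0]
    offer     = local.image_parts[1]
    sku       = local.image_parts[2]
    version   = local.image_parts[3]
  }
}
```

Whether this is worth the indirection is debatable. Four plain arguments are arguably easier to read than one string that has to be picked apart.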

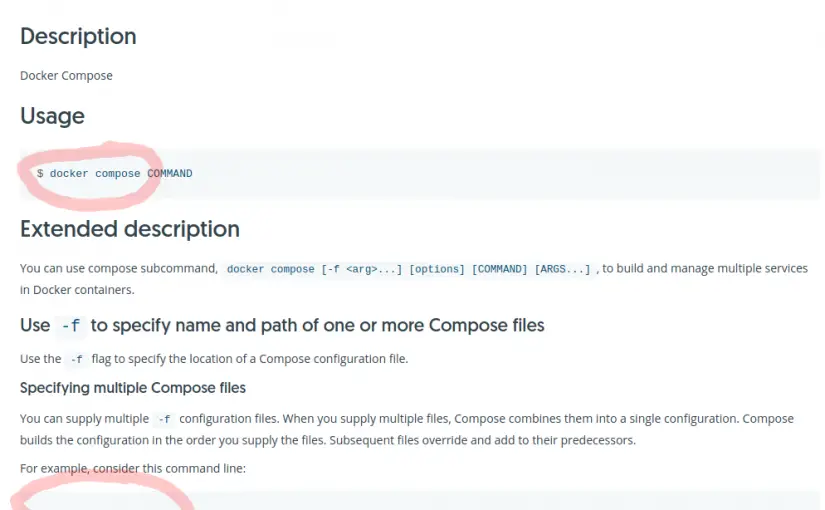

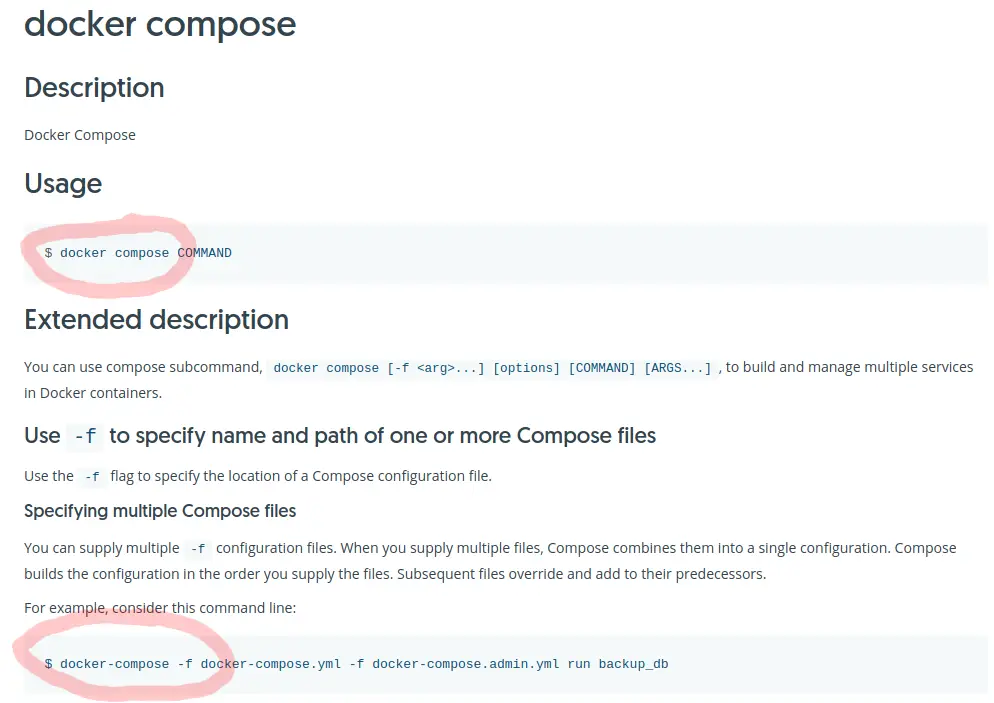

There isn’t an example in the docs of how to use source_image_id, so I’d just guessed. Wrongly, it seems.

The reason I say I haven’t fixed this is that I still don’t know the right format to use here. I just know that when I use source_image_reference instead, the error goes away. Good enough for me.
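A likely explanation, offered as a hedged sketch and not tested in this project: going by the azurerm provider documentation, source_image_id appears to expect a full Azure resource ID (for example, that of a custom managed image or a Shared Image Gallery image version) and not a marketplace string like Canonical:UbuntuServer:18.04-LTS:latest. That would be consistent with the error, since a resource ID is a path of alternating key and value segments. The image name and resource group below are placeholders, and the resource is again shown as a fragment.

```hcl
# Look up an existing custom image. Both names are hypothetical placeholders.
data "azurerm_image" "custom" {
  name                = "my-custom-image"
  resource_group_name = "my-images-rg"
}

resource "azurerm_linux_virtual_machine" "christest" {
  # ... other arguments unchanged from the resource shown earlier ...

  # A full resource ID, roughly of the form:
  # /subscriptions/<sub>/resourceGroups/<rg>/providers/Microsoft.Compute/images/<name>
  source_image_id = data.azurerm_image.custom.id
}
```

For a stock marketplace image such as Canonical's Ubuntu, source_image_reference remains the argument to use. The two are alternatives, so only one of them should be set on the resource.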