Not a fun way to start a Saturday morning. With a bit of spare time, I wanted to continue some refactoring work on a tool I’ve been building for checking broken links on any given website.

The project is quite cool (in my opinion), using a bunch of interesting software / tech such as RabbitMQ with Symfony’s Messenger component, STOMP for real-time stuff, React with Hooks, Tailwind for CSS… and a bunch more buzz-wordy, CV-helping stuff that keeps me gainfully employed.

Anyway, the first thing I did was spin up the Symfony Docker containers that run the various services to handle incoming broken link checking requests. And as ever, I ran a composer update to bring Symfony up to 4.3.x.

I’m not sure if bumping up to Symfony 4.3 was the cause of this problem. I suspect not. It’s been a while since I’ve worked on this part of the code, but it was all working the last time I brought the project up. And it’s working live and online, too, so something has gone awry.

Anyway, after the composer update completed successfully:

    composer update
    Loading composer repositories with package information
    Updating dependencies (including require-dev)
    Prefetching 49 packages 🎶 💨
      - Downloading (100%)
    Package operations: 7 installs, 42 updates, 1 removal
      - Removing symfony/contracts (v1.0.2)
      - Updating symfony/flex (v1.2.3 => v1.2.5): Loading from cache
      - Installing symfony/service-contracts (v1.1.2): Loading from cache
      - Installing symfony/polyfill-php73 (v1.11.0): Loading from cache
      - Updating symfony/console (v4.2.8 => v4.3.0): Loading from cache
      - Installing symfony/event-dispatcher-contracts (v1.1.1): Loading from cache
      - Updating symfony/event-dispatcher (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/css-selector (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/dom-crawler (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/messenger (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/process (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/serializer (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/routing (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/finder (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/filesystem (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/debug (v4.2.8 => v4.3.0): Loading from cache
      - Installing symfony/polyfill-intl-idn (v1.11.0): Loading from cache
      - Installing symfony/mime (v4.3.0): Loading from cache
      - Updating symfony/http-foundation (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/http-kernel (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/dependency-injection (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/config (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/var-exporter (v4.2.8 => v4.3.0): Loading from cache
      - Installing symfony/cache-contracts (v1.1.1): Loading from cache
      - Updating symfony/cache (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/framework-bundle (v4.2.8 => v4.3.0): Loading from cache
      - Installing symfony/translation-contracts (v1.1.2): Loading from cache
      - Updating symfony/validator (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/yaml (v4.2.8 => v4.3.0): Loading from cache
      - Updating nikic/php-parser (v4.2.1 => v4.2.2): Loading from cache
      - Updating symfony/translation (v4.2.8 => v4.3.0): Loading from cache
      - Updating nesbot/carbon (2.17.1 => 2.19.0): Loading from cache
      - Updating illuminate/contracts (v5.8.15 => v5.8.19): Loading from cache
      - Updating illuminate/support (v5.8.15 => v5.8.19): Loading from cache
      - Updating symfony/inflector (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/property-access (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/property-info (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/monolog-bridge (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/dotenv (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/phpunit-bridge (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/expression-language (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/stopwatch (v4.2.8 => v4.3.0): Loading from cache
      - Updating composer/xdebug-handler (1.3.2 => 1.3.3): Loading from cache
      - Updating symfony/var-dumper (v4.2.8 => v4.3.0): Loading from cache
      - Updating twig/twig (v2.9.0 => v2.11.0): Loading from cache
      - Updating symfony/twig-bridge (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/debug-bundle (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/twig-bundle (v4.2.8 => v4.3.0): Loading from cache
      - Updating symfony/web-profiler-bundle (v4.2.8 => v4.3.0): Loading from cache
      - Updating roave/security-advisories (dev-master 1dfa887 => dev-master 4c0ba8a)
    Writing lock file
    Generating autoload files
    ocramius/package-versions: Generating version class...
    ocramius/package-versions: ...done generating version class
    What about running composer global require symfony/thanks && composer thanks now?
    This will spread some 💖 by sending a ★ to the GitHub repositories of your fellow package maintainers.
    Executing script cache:clear [OK]
    Executing script assets:install public [OK]

I tried to run my messenger consumer:

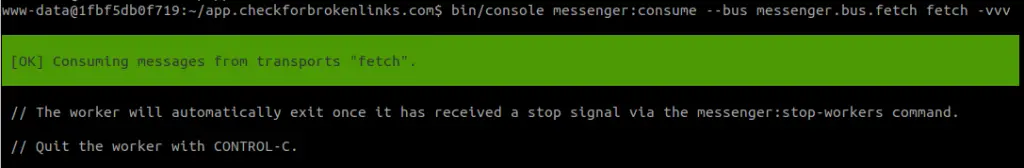

    www-data@1fbf5db0f719:~/app.checkforbrokenlinks.com$ bin/console messenger:consume --bus messenger.bus.fetch fetch -vvv

    [OK] Consuming messages from transports "fetch".

    // The worker will automatically exit once it has received a stop signal via the messenger:stop-workers command.
    // Quit the worker with CONTROL-C.

    In AmqpReceiver.php line 56:

    [Symfony\Component\Messenger\Exception\TransportException]
    Server channel error: 406, message: PRECONDITION_FAILED - inequivalent arg 'type' for exchange 'fetch' in vhost '/': received 'fanout' but current is 'direct'

    Exception trace:
    () at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/AmqpReceiver.php:56
    Symfony\Component\Messenger\Transport\AmqpExt\AmqpReceiver->getEnvelope() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/AmqpReceiver.php:47
    Symfony\Component\Messenger\Transport\AmqpExt\AmqpReceiver->get() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Worker.php:92
    Symfony\Component\Messenger\Worker->run() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Worker/StopWhenRestartSignalIsReceived.php:54
    Symfony\Component\Messenger\Worker\StopWhenRestartSignalIsReceived->run() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Command/ConsumeMessagesCommand.php:224
    Symfony\Component\Messenger\Command\ConsumeMessagesCommand->execute() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Command/Command.php:255
    Symfony\Component\Console\Command\Command->run() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Application.php:939
    Symfony\Component\Console\Application->doRunCommand() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/framework-bundle/Console/Application.php:87
    Symfony\Bundle\FrameworkBundle\Console\Application->doRunCommand() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Application.php:273
    Symfony\Component\Console\Application->doRun() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/framework-bundle/Console/Application.php:73
    Symfony\Bundle\FrameworkBundle\Console\Application->doRun() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Application.php:149
    Symfony\Component\Console\Application->run() at /var/www/app.checkforbrokenlinks.com/bin/console:39

    In Connection.php line 348:

    [AMQPExchangeException (406)]
    Server channel error: 406, message: PRECONDITION_FAILED - inequivalent arg 'type' for exchange 'fetch' in vhost '/': received 'fanout' but current is 'direct'

    Exception trace:
    () at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/Connection.php:348
    AMQPExchange->declareExchange() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/Connection.php:348
    Symfony\Component\Messenger\Transport\AmqpExt\Connection->setup() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/Connection.php:311
    Symfony\Component\Messenger\Transport\AmqpExt\Connection->get() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/AmqpReceiver.php:54
    Symfony\Component\Messenger\Transport\AmqpExt\AmqpReceiver->getEnvelope() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Transport/AmqpExt/AmqpReceiver.php:47
    Symfony\Component\Messenger\Transport\AmqpExt\AmqpReceiver->get() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Worker.php:92
    Symfony\Component\Messenger\Worker->run() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Worker/StopWhenRestartSignalIsReceived.php:54
    Symfony\Component\Messenger\Worker\StopWhenRestartSignalIsReceived->run() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/messenger/Command/ConsumeMessagesCommand.php:224
    Symfony\Component\Messenger\Command\ConsumeMessagesCommand->execute() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Command/Command.php:255
    Symfony\Component\Console\Command\Command->run() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Application.php:939
    Symfony\Component\Console\Application->doRunCommand() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/framework-bundle/Console/Application.php:87
    Symfony\Bundle\FrameworkBundle\Console\Application->doRunCommand() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Application.php:273
    Symfony\Component\Console\Application->doRun() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/framework-bundle/Console/Application.php:73
    Symfony\Bundle\FrameworkBundle\Console\Application->doRun() at /var/www/app.checkforbrokenlinks.com/vendor/symfony/console/Application.php:149
    Symfony\Component\Console\Application->run() at /var/www/app.checkforbrokenlinks.com/bin/console:39

    messenger:consume [-l|--limit LIMIT] [-m|--memory-limit MEMORY-LIMIT] [-t|--time-limit TIME-LIMIT] [--sleep SLEEP] [-b|--bus BUS] [-h|--help] [-q|--quiet] [-v|vv|vvv|--verbose] [-V|--version] [--ansi] [--no-ansi] [-n|--no-interaction] [-e|--env ENV] [--no-debug] [--] <command> [<receivers>...]

Knickers. It all blew up quite badly.

There’s a lot of info to process, and without some nice terminal colouring it’s all a bit of a blur.

The interesting line is:

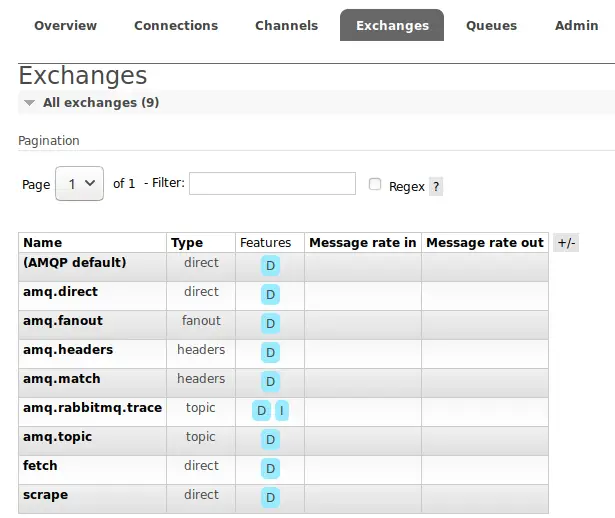

    Server channel error: 406, message: PRECONDITION_FAILED - inequivalent arg 'type' for exchange 'my_exchange' in vhost '/': received 'fanout' but current is 'direct'

What I think has gone wrong is that, at some point in the past, I switched my RabbitMQ exchange over to the direct type, whereas Symfony’s Messenger component tries to declare the exchange as fanout by default.

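To clarify, my exchange and queue combo already exists at: amqp://{username}:{password}@rabbitmq:5672/%2f/fetch (here %2f is the URL-encoded default vhost /, and the final segment, fetch, is the name Messenger uses for the exchange and queue).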

It exists because I have previously configured my RabbitMQ instance to boot up with this exchange / queue combo ready and good to go.

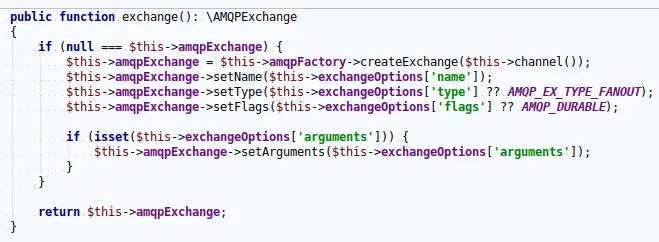

Because Symfony’s Messenger component does not know that this exchange and queue already exist, it tries to declare them itself.

The declaration fails because Messenger asks for a fanout exchange by default, and RabbitMQ refuses to redeclare an existing exchange with a different type. That refusal is the 406 PRECONDITION_FAILED error above.

To make this work, I needed to explicitly set the exchange type to direct in the config for each transport.

Finding this out via the documentation wasn’t super straightforward. Here are a few of the steps I took:

    bin/console config:dump-reference framework

This shows that for each framework.messenger.transports entry in your config/packages/messenger.yaml file, you can have a variety of additional settings.

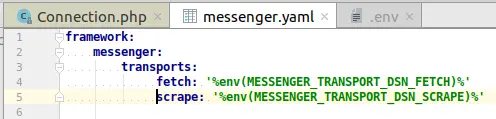

As it was, my original config looked like this:

By providing just a DSN (by way of environment variables), all the default config would be used.
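The original snippet is not reproduced here. A DSN-only setup of the kind described would look roughly like the sketch below. It assumes the same transport names and environment variables as the config I swapped to, so treat it as a reconstruction rather than the actual file.

```yaml
# Hypothetical reconstruction, not the original file.
# Each transport is given only a DSN, so Messenger falls back to its
# defaults, including declaring the exchange with the fanout type.
framework:
    messenger:
        transports:
            fetch: '%env(MESSENGER_TRANSPORT_DSN_FETCH)%'
            scrape: '%env(MESSENGER_TRANSPORT_DSN_SCRAPE)%'
```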

What I needed to do was swap over to this:

    framework:
        messenger:
            transports:
                fetch:
                    dsn: '%env(MESSENGER_TRANSPORT_DSN_FETCH)%'
                    options:
                        exchange:
                            type: 'direct'
                scrape:
                    dsn: '%env(MESSENGER_TRANSPORT_DSN_SCRAPE)%'
                    options:
                        exchange:
                            type: 'direct'

And after doing so, it all started working again.

In short, this isn’t directly a Symfony / Symfony Messenger problem. It’s a config problem. The error messaging could be a little clearer, as could the documentation on which transport options are available.